Case #1: Visualizing the Past

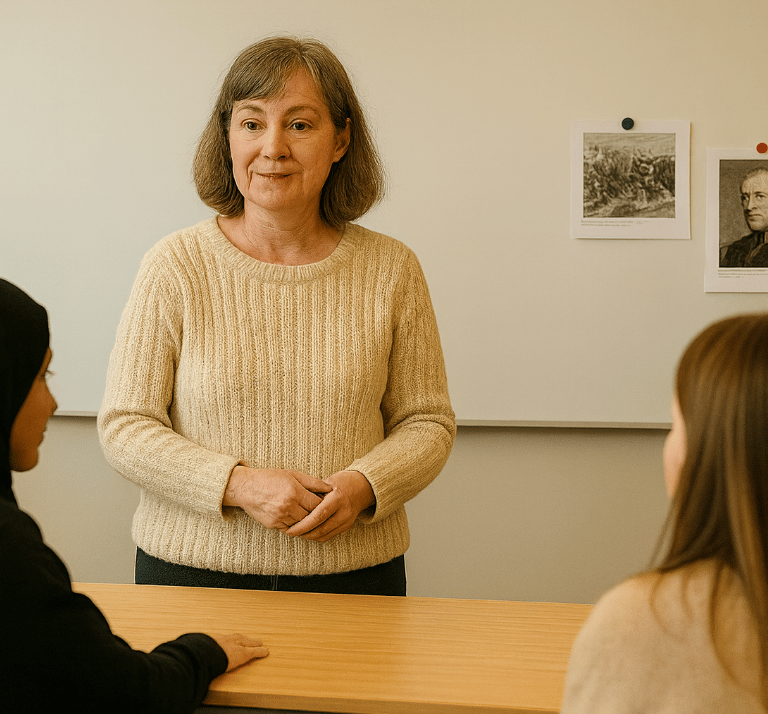

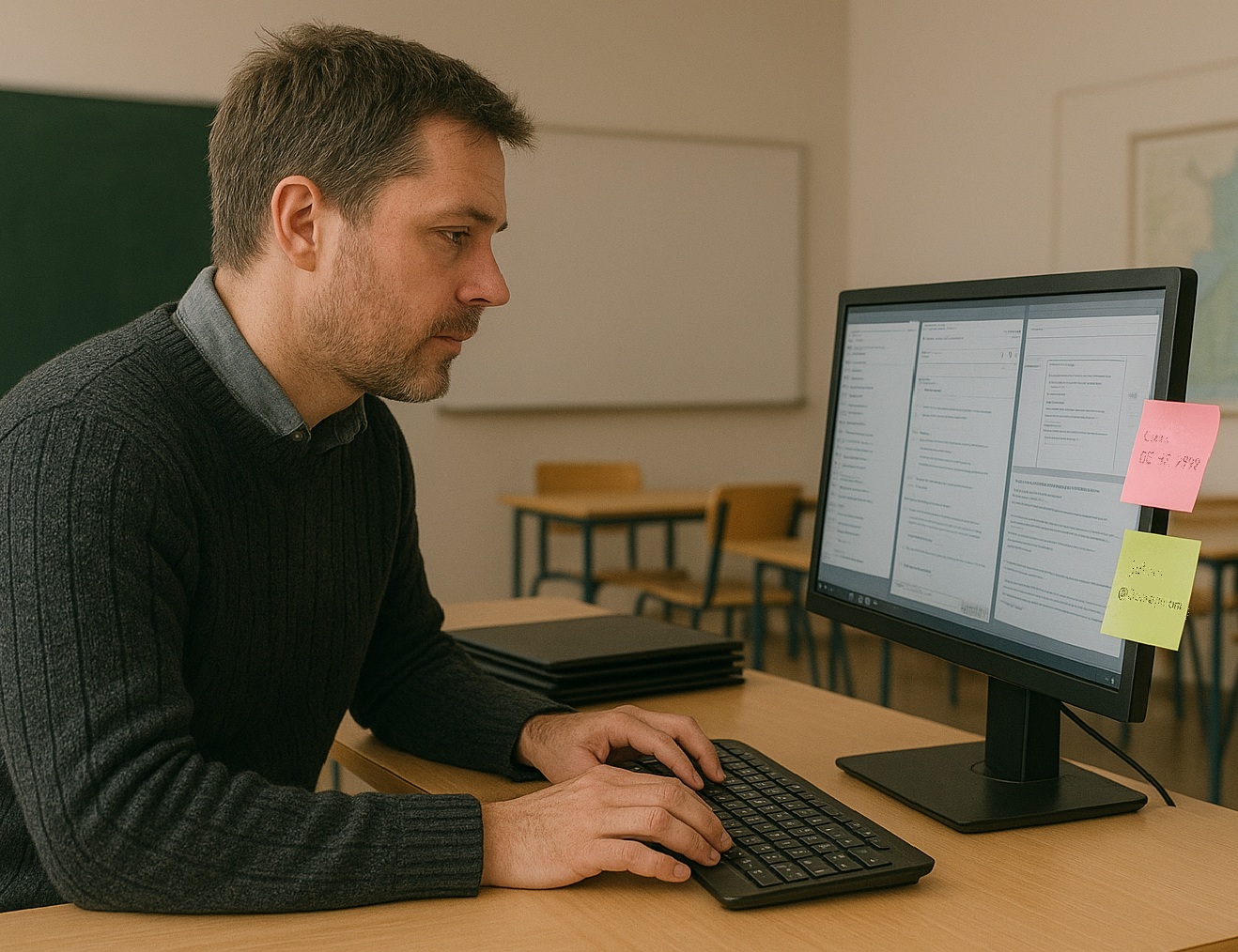

Card 1: Teacher Information

Veera is a classroom teacher working at a primary school in Finland. Since Finnish education emphasizes inquiry-based learning and critical thinking, she wants her students to actively analyze historical concepts rather than simply memorizing the information during her Environmental Studies lessons.

To engage her students, Veera decides to use historical images as primary sources and have students generate their own AI-created interpretations of historical concepts such as "past vs. present".

Card 2: Instructional Use

Veera implements her lesson by:

-Step 1: Showing students a real historical painting depicting a village in the past and asking them to identify historical details (e.g., clothing, buildings, daily life).

-Step 2: Dividing the students into small groups and instructing them to use DALL-E (an AI-powered image generation tool in ChatGPT) to create an image that represents a village in the past.

She guides them with prompts like "Generate an image of a village in the past."

She also shows them an example video of how DALL-E can be used to create images (https://www.youtube.com/watch?v=J683kmIfI5s).

-Step 3: Having the students compare the AI-generated images with real historical sources.

Once the AI images are generated, students compare them with the original historical painting and identify the inaccuracies in the AI-generated images.

Card 3: Solution

✅ To encourage students to think critically about AI-generated images vs. real historical sources, Veera facilitates a classroom discussion by asking:

What looks different in the AI-generated image and the real historical painting? Why do you think this happened?

Do you think AI understands history, or is it just guessing based on existing images?

How can we check if a picture really shows how things looked in the past?

If AI gets history wrong, who is responsible for correcting it?

Card 4: User Reflection

Imagine you are Veera.

🔹 What would you do in this case?

🔹 What would you suggest to Veera to help students critically evaluate AI-generated content that illustrates historical concepts such as "the past"?

👉 Write your reflection in the text box below. Your insights will contribute to a shared discussion on the responsible use of AI in education.

Case #2: Vocabulary Development in a Multilingual Classroom

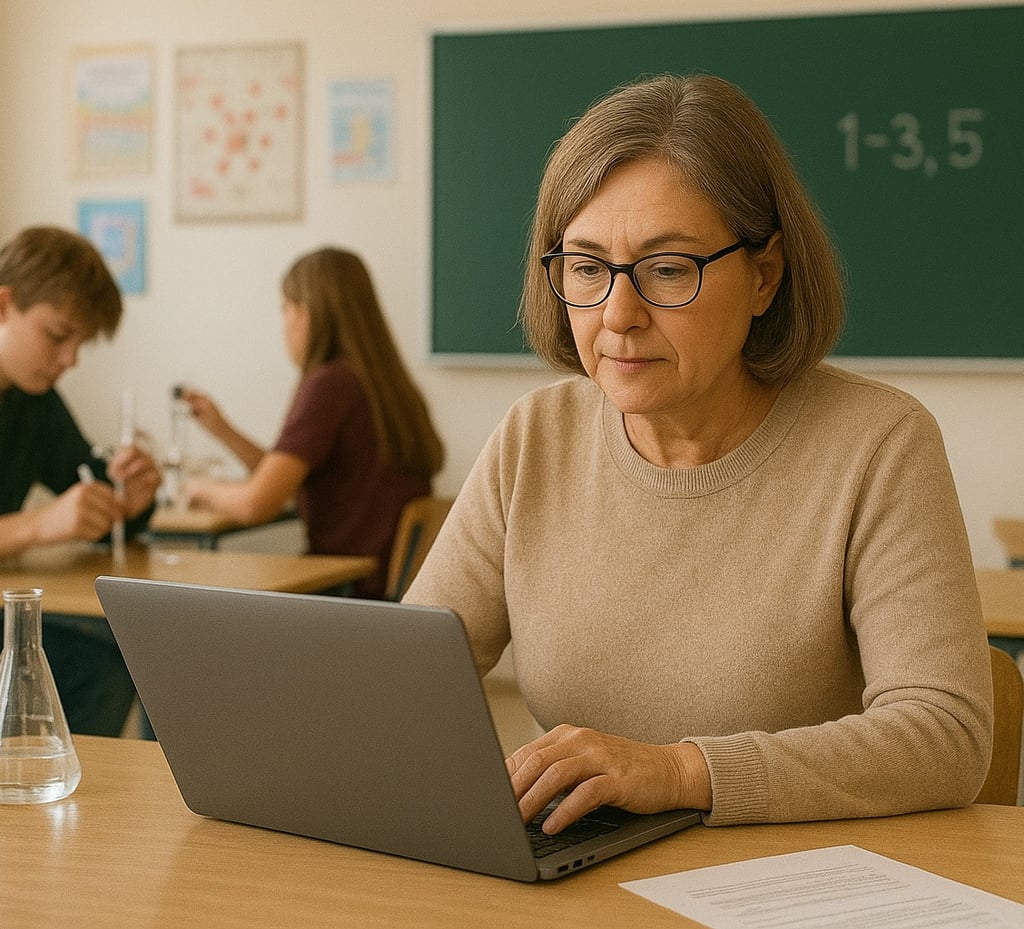

Card 1: Teacher Information

Minna is a 1st-grade teacher with 3 years of experience, working at a Finnish primary school. She teaches in a linguistically diverse classroom. Some of her 1st graders are Finnish-speaking, while others are learning Finnish as a second language. To support vocabulary learning for all learners, she decides to use GrammarlyGO (an AI tool that helps simplify and rephrase text) to generate age-appropriate definitions and example sentences for new words they encounter in class.

She prompts the tool by typing:

"Give me a simple explanation and an example sentence for the word 'metsä' (forest) for a 1st grader."

The tool produces examples like:

"A forest is a big area with many trees. We went hiking in the forest."

Minna prints these out and uses them as support cards for both native and non-native speakers.

Card 2: Ethical Challenge

Minna begins to notice:

The AI-generated vocabulary support often favors native Finnish speakers.

Some of the definitions and example sentences include vocabulary or contexts that assume fluency, making them difficult for second-language learners to understand.

Additionally, the examples sometimes reflect only majority culture experiences, leaving out diverse perspectives found in her classroom.

Despite using simplified prompts, the tool does not adjust its output based on the varying needs of her students. While the AI appears neutral, Minna realizes it may unintentionally reinforce inequity by offering more accessible support to some students than others.

She asks herself: "Can I continue using this tool if it benefits certain learners more than others and fails to promote equal learning opportunities for all?"

Card 3: Possible Solutions

Imagine you are Minna.

🔹 What would you do in this case?

🔹 What would you suggest to the teacher?

👉 Select from the box(es) below. Your insights will contribute to a shared discussion on the responsible and ethical use of AI in education.

Multiple Choice Answers:

A: Test the AI outputs with both native and non-native speakers to check if they support all learners equally.

B: Use the same AI-generated content for all students to maintain consistency, even if it’s harder for some.

C: Adjust prompts and add teacher support where necessary to ensure that explanations are clear and inclusive.

D: Talk with colleagues about possible strategies for using AI fairly to support students from different language backgrounds.

Case #3: Curriculum Design in Music Education

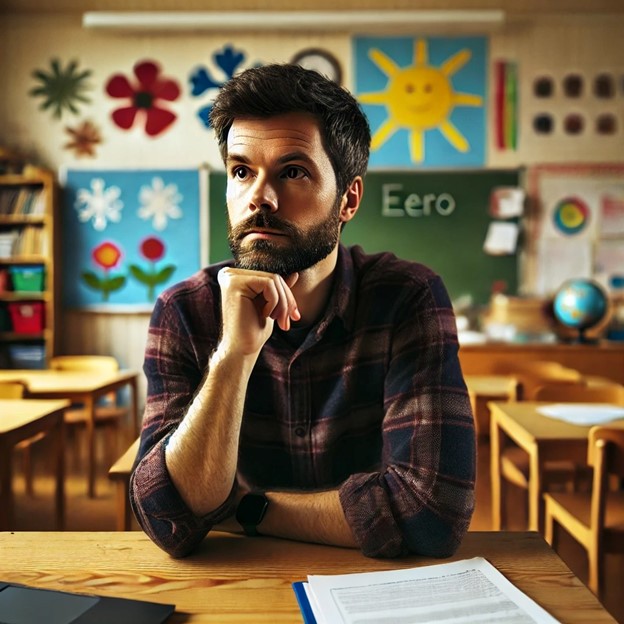

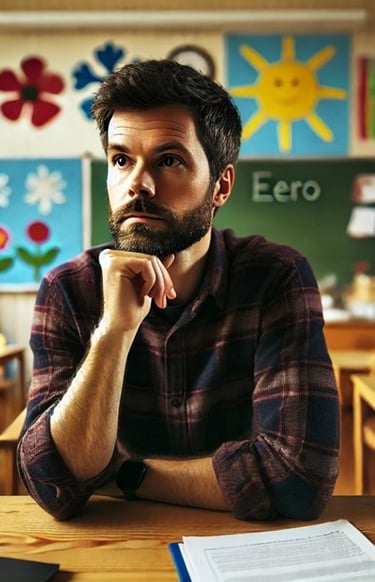

Card 1: Teacher Information

Matias is a classroom teacher in a Finnish primary school, teaching music education. This year, he has been asked to design a new curriculum module for his students, aligning with the Finnish National Core Curriculum. He wants the curriculum to reflect student-centered and hands-on learning, as emphasized in Finnish education.

To make the process more efficient, Matias decides to use Eduaide AI (an AI-powered tool for curriculum design and lesson planning) to generate a structured curriculum module outline.

Card 2: Instructional Use

Matias prompts Eduaide AI:

"Generate a curriculum module for primary school music education in Finland, including key objectives, activities, and assessments."

While the AI-generated curriculum provides a well-structured plan, Matias quickly identifies several issues:

The content lacks adaptability. It focuses on predefined lesson sequences and standardized activities, leaving little room for improvisation, group collaboration, or student-driven musical exploration, which are key aspects of Finland's music education approach.

The assessment methods are too traditional. The AI prioritizes technical skill evaluation over student-led musical exploration, limiting opportunities for creative experimentation with instruments and sounds.

Matias realizes that while AI provides a helpful starting point, it does not fully capture the Finnish educational approach.

Card 3: Possible Solution

Matias modifies his prompt to be more specific:

"Generate a curriculum module for primary school music education in Finland that emphasizes student-led exploration, improvisation, and group collaboration."

This improves the AI-generated output, but Matias knows further refinement is needed.

✅ To ensure the curriculum aligns with national guidelines, Matias:

Reviews the Finnish National Agency for Education curriculum to verify the AI-generated learning objectives and teaching strategies.

Consults colleagues for insights on designing student-led learning experiences.

Card 4: User Reflection

Imagine you are Matias.

🔹 What would you do in this case?

🔹 What would you suggest to Matias to enhance his AI-assisted curriculum planning and ensure better alignment with the Finnish National Education guidelines?

👉 Write your reflection in the text box below. Your insights will contribute to a shared discussion on the responsible use of AI in education.

Case #4: Math Feedback and Scoring

Card 1: Teacher Information

Sari is a 2nd-grade teacher, working at a Finnish primary school. She teaches early math concepts such as counting, recognizing patterns, and solving simple addition problems.

To support independent practice, she introduces Khan Academy Kids (an AI-powered learning app that uses adaptive learning technology to personalize tasks and give instant feedback to learners).

Students use tablets to solve simple problems on these early math concepts. The app automatically adjusts the difficulty level and shows progress with stars, happy faces, or "level up" sounds. Sari uses the app's built-in analytics to check how each student is doing.

Card 2: Ethical Challenge

At first, the tool seems fun and engaging, but Sari soon notices:

Some students who struggle with reading instructions or navigating the tablet get lower scores, even though they understand the math

The app rewards speed and accuracy, which can unfairly favor quick, confident users

Learners who use assistive features like audio support may progress more slowly, causing them to appear "behind" in the analytics

Students start comparing their "levels," leading some to feel discouraged

Sari later realizes that the AI-based tool:

Doesn't clearly account for diverse learning needs

May unintentionally disadvantage certain students, even if the content is well-designed

Appears neutral but may reproduce classroom inequalities through subtle scoring biases

She asks herself: Can I use AI-generated scores or feedback if they favor some learners over others?

Card 3: Possible Solutions

Imagine you are Sari.

🔹 What would you do in this case?

🔹 What would you suggest to the teacher?

👉 Select from the box(es) below. Your insights will contribute to a shared discussion on the responsible and ethical use of AI in education.

Multiple Choice Answers:

A: Use the AI scores as the main basis for evaluating students, since it ensures objectivity.

B: Check how the tool scores performance and whether it accounts for differences in reading speed or support needs.

C: Combine AI feedback with your own observations to better understand each student’s abilities and progress.

D: Give alternative tasks or formats for students who need more time or support, ensuring they can demonstrate learning without pressure.

Case #5: Activity Questions for Exploring Materials

Card 1: Teacher Information

Veli is a primary school teacher with 12 years of teaching experience. He is teaching 2nd graders, and the topic is exploring materials and their properties (e.g., soft, hard, wet, dry). Next week, he is going to prepare a play-based assessment on this topic.

Since he has limited time, he decides to use ChatGPT to generate simple observation-based or hands-on activity questions. He asks for interactive assessment ideas, such as sorting objects by texture, identifying materials in daily life, or discussing how water changes when it gets cold or warm.

Card 2: Instructional Use

Veli prompts ChatGPT with:

"Generate interactive, play-based assessment activities for 2nd graders on exploring materials and their properties (e.g., soft/hard, wet/dry, and how water changes when heated or cooled)."

After reviewing the output, he identifies two issues:

Some suggested activities are too abstract for young learners, requiring explanations beyond their developmental level.

A few activities do not align with hands-on, sensory exploration, relying too much on verbal questioning rather than real-world observation and play.

Card 3: Possible Solution

✅ To improve the quality of his assessment activities, Veli decides to try an alternative AI tool:

He generates a new set of interactive, hands-on activity ideas using Google Gemini after watching a short video about how to use it.

He compares the suggestions from ChatGPT and Gemini, evaluating which aligns best with student-centered, play-based learning.

He selects the most engaging and developmentally appropriate activities, refining them to create a balanced, exploratory assessment that encourages observation, discussion, and real-world connections.

Card 4: User Reflection

Imagine you are Veli.

🔹 What would you do in this case?

🔹 What would you suggest to Veli to help him use AI appropriately to create assessments that are engaging, age-appropriate, and accurate?

Case #6: Seasons and Weather

Card 1: Teacher Information

Eero is a second-grade teacher at a Finnish primary school with 8 years of teaching experience. He is teaching a unit on seasons and weather to his students as part of his Environmental Studies class.

To create more personalized content and save time, he decides to use Microsoft Copilot to help generate weather-related worksheets and visual materials for his students.

He types prompts like:

"Create a worksheet for 2nd graders about spring weather in Finland."

"Make a fun quiz about the four seasons for early primary students."

Card 2: Ethical Challenge

The AI generates polished materials quickly, but Eero notices some odd or inaccurate phrases, such as describing the Finnish summer as "extremely hot," or including weather examples from other continents with no clear relevance.

Eero feels that the developers of Copilot should be accountable for such mistakes in educational contexts. However, he soon realizes:

There is very little information about who actually built the tool.

He doesn't know how Microsoft Copilot creates content.

The tool seems to rely on general web data, not Finland-specific educational sources.

Later, Eero raises a concern about accountability and finds himself asking: "Is it responsible to use content generated by an AI tool when the developers and their educational values are unknown?"

Card 3: Possible Solutions

Imagine you are Eero.

🔹 What would you do in this case?

🔹 What would you suggest to the teacher?

👉 Select from the box(es) below. Your insights will contribute to a shared discussion on the responsible and ethical use of AI in education.

Multiple Choice Answers:

A: Research Microsoft Copilot’s documentation to understand how the tool generates content and what data sources it uses.

B: Trust the tool’s generated content as-is because it was developed by a well-known tech company.

C: Consult your school’s digital learning coordinator to assess whether the tool is suitable for early primary education.

D: Use Copilot only for drafting ideas and revise or replace content to ensure it aligns with the curriculum.

Case #7: AI and Speech Writing

Slide 1: Introducing Context and Challenge

Susanna, a Finnish language and literature teacher in a lower secondary school, assigns a traditional task: each 9th grader writes a farewell speech as they finish school.

When the speeches are submitted, Susanna realizes that many students have used generative AI to write the entire text. The speeches sound polished but lack personal voice and authenticity. Susanna wonders: How can I ensure the students learn how to write a speech that reflects their own experiences?

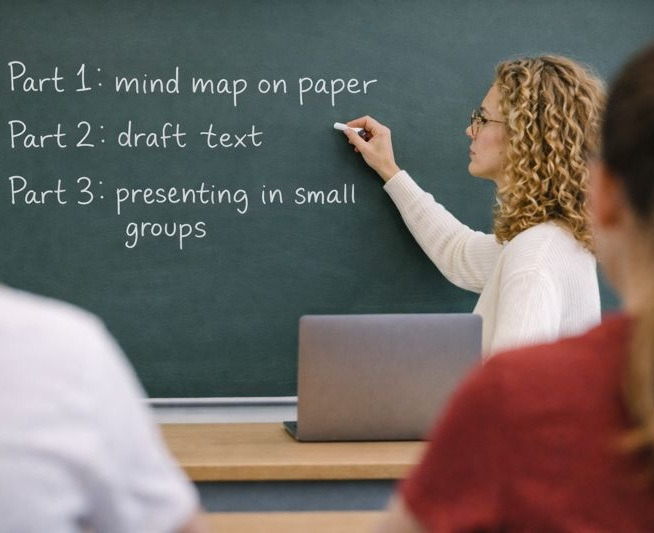

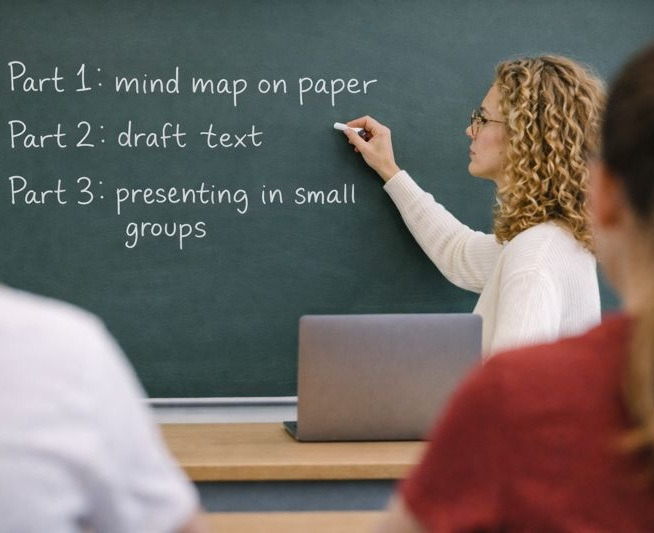

Slide 2: Teacher’s Solution

Susanna decides to keep the AI option but adds a new requirement. Every speech must include personal experiences and memories from the student’s school years.

She explains that the goal is to make the speech meaningful and unique, something only the student can write.

Susanna also models this by sharing examples of authentic memories and discussing why they matter in a farewell speech.

Slide 3: User Reflection

Imagine you are Susanna.

🔹 What would you do in this situation?

🔹 How can teachers design assignments so that AI supports learning without replacing students’ personal voice and experiences?

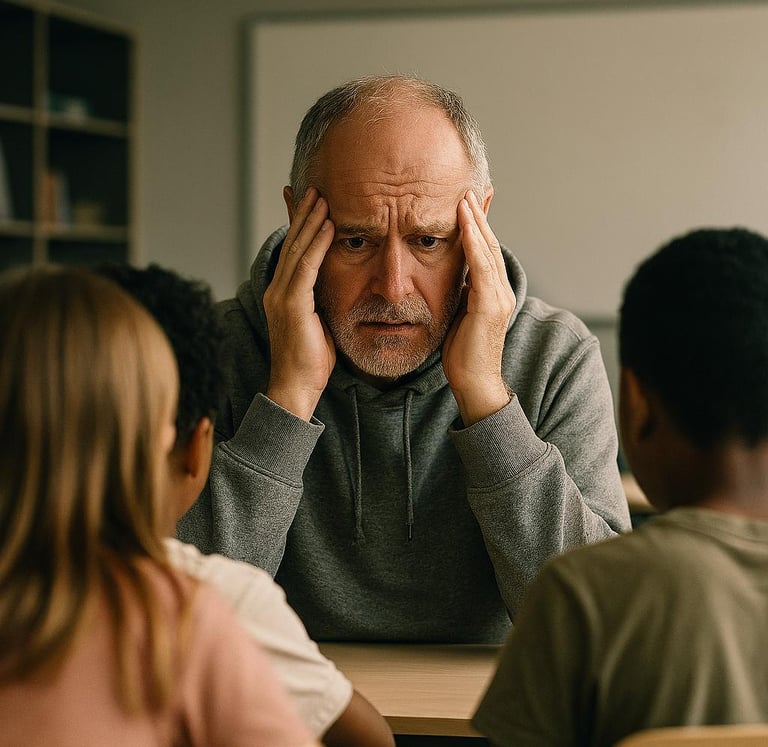

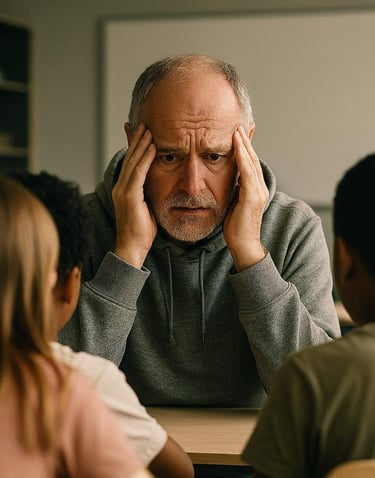

Case #8: Technostress about AI

Slide 1: Introducing Context and Challenge

Jukka, a primary school teacher in a combined 3rd to 4th grade class, notices that his school is encouraging teachers to integrate generative AI tools into lessons. He sees colleagues experimenting with AI for lesson planning and creative writing tasks.

Jukka feels technostress:

He doesn’t fully understand the pedagogical benefits of AI.

He worries about falling behind professionally if he doesn’t adopt these tools.

The rapid pace of change and lack of clear guidance make him anxious: he is not sure what his role is when AI seems to do so much.

Slide 2: Teacher’s Solution

Instead of rushing into full adoption, Jukka aims to gain a sense of control and professional agency through the following actions. He:

Starts small: He chooses one simple AI-supported activity (e.g., generating vocabulary lists for Finnish language lessons).

Seeks support: Talks to his teaching partner, who is a more active user of generative AI in teaching, and shares his concerns.

Focuses on pedagogy first: Learns about how AI can support creativity and differentiation without replacing core teaching practices or the personal connection he has with the children in his class.

Advocates for training: Suggests to the school leadership that professional development sessions on AI literacy and ethics be organized.

Slide 3: User Reflection

Imagine you are Jukka.

🔹 What would you do in this situation?

🔹 How can the school support teachers in adopting AI without creating pressure or compromising pedagogical integrity?

Case #9: AI-Generated Exam

Slide 1: Introducing Context and Challenge

Lilja, a history teacher at a lower secondary school (yläkoulu), decides to experiment with generative AI to create a full exam on Finnish history. She hopes it will save time and make the exam more engaging.

When students take the exam, they notice that several questions and answer options don’t match what their textbooks contain. They raise concerns about the reliability of the exam and the fairness of the assessment. Later, Lilja’s colleague questions whether the exam allows her to assess the students in line with the Finnish National Core Curriculum and the local curriculum.

Slide 2: Teacher's Solution

Lilja acknowledges both the students’ concerns and her colleague’s observation. She realizes that the AI-generated exam may not fully reflect the curriculum or the textbooks used in class.

She decides to revise her practices by:

Cross-checking AI-generated questions against the curriculum and the teaching materials used in class.

Keeping some AI-created questions but reframing them as supplementary or enrichment tasks to encourage critical thinking and comparison.

Explaining to the students how AI has been used and answering their concerns.

Lilja also uses this as a teaching moment: she discusses with her students how AI can produce useful but sometimes unreliable information, and why source evaluation is essential in both academic work and everyday life.

Slide 3: User Reflection

🔹Imagine you are Lilja. What would you do in this situation?

🔹How can teachers balance the efficiency of AI tools with the responsibility to ensure that assessment reflects the curriculum and supports student learning?

Case #10: Unequal Access to AI Tools

Slide 1: Introducing Context and Challenge

Olivia is a primary school teacher who also teaches music in grades 7–9. She is eager to explore generative AI to help plan lessons and create materials, especially for music.

She discovers that some of her subject teacher colleagues have access to a paid version of a generative AI tool. Olivia, however, was never informed about the pilot project through which these accounts were distributed.

When Olivia tries to use the free version, she quickly runs into limitations, such as restricted output length and fewer customization options. This makes her feel disadvantaged compared to her colleagues despite her own readiness to make use of the tools. Olivia feels frustrated by the situation.

Slide 2: Teacher's Solution

Olivia raises the issue with her principal, explaining how unequal access to AI tools can affect both her workload and the quality of materials she can provide to students.

Together, they agree on a plan:

The principal commits to greater transparency about pilot projects and resource distribution.

Olivia is invited to join the next phase of the pilot, ensuring she has equal access to the paid version.

In the meantime, Olivia adapts by combining the free AI tool with traditional resources and by collaborating with the other music teacher, who has full access.

Slide 3: User Reflection

🔹Imagine you are Olivia. What would you do in this situation?

🔹How can schools ensure fairness and transparency when introducing new digital tools like AI?

Case #11: Hesitation to Use AI in Teaching

Slide 1: Introducing Context and Challenge

Juho, a subject teacher at a lower secondary school, has not yet used AI in his teaching. He feels he lacks the time to explore it properly. He doesn’t want to jump in without preparation, and would prefer to receive some training before integrating AI into his lessons.

Juho often thinks about using AI and sees potential value in it but hesitates because neither he nor his students know how to use it effectively. However, he notices that some students already use AI to complete textbook tasks. In class discussions, Juho observes that while some students can critically evaluate AI-generated answers and spot mistakes, others accept the responses uncritically, which raises concerns about uneven digital literacy.

Slide 2: Teacher's Solution

Juho decides to take a gradual approach:

He begins by openly discussing AI use with his students, emphasizing both its possibilities and its limitations.

Instead of redesigning assignments immediately, he uses class discussions to highlight examples where AI answers are correct and where they fall short, modeling critical thinking.

Juho also raises the issue with the school’s technology team, suggesting that teachers receive training on AI tools so they can guide students more effectively.

This way, Juho acknowledges the reality of AI use among students while preparing himself and his school community for more structured integration in the future.

Slide 3: User Reflection

🔹Imagine you are Juho. What would you do in this situation?

🔹How can teachers support students in developing critical thinking about AI-generated content, even if the teachers themselves do not feel fully equipped to integrate AI into their work?

Case #12: Bullying with AI

Slide 1: Introducing Context and Challenge

Silva, a primary school teacher, introduces an AI writing tool to help her students create imaginative stories in Finnish. The activity is meant to boost creativity and enrich language skills.

However, during the lesson, Silva notices a group of students laughing at an AI-generated story that includes a classmate’s name in a negative way. They had prompted the tool to “write a silly story about [classmate’s name] being the worst student.” When confronted, they claim it is only harmless fun.

Silva feels concerned: How should she respond without banning AI completely? What boundaries should she set?

Slide 2: Teacher's Solution

Silva pauses the activity and gathers the class for a discussion about responsible AI use. Together, they create a Classroom AI Code of Conduct, which includes rules like:

No using AI to create content about real people.

Always show respect and empathy in prompts.

Silva also plans a short lesson on digital citizenship and empathy, connecting it to the Finnish curriculum’s transversal competence areas (for example, ICT competence).

Slide 3: User Reflection

🔹Imagine you are Silva. What would you do in this situation?

🔹What kind of ethical responsibility must be taught with AI use?

Case #13: Compiling Dietary Restrictions Information with AI

Slide 1: Introducing Context and Challenge

Principal Aino is organizing a school trip and needs to order snacks. She already has allergy and dietary information for each class, but the data is scattered across multiple places. To make ordering easier, Aino wants to quickly calculate the total number of students with each dietary restriction.

She decides to use an AI tool to query and aggregate this information from the school’s existing database.

Aino faces several concerns:

Data privacy: The database contains sensitive health information.

Accuracy: Will the AI correctly interpret different formats and notes in the records?

Responsibility: If the AI miscalculates and some students are left without a suitable snack, who is accountable?

Slide 2: Principal's Solution

Aino is aware of the risks.

She uses the AI tool only to generate total numbers, not handling individual student details.

She verifies the AI’s output by manually checking a sample of records.

She documents the process and informs staff for transparency.

She consults the municipality’s data protection officer to confirm her judgement.

This way, Aino creates a time-saving solution that can also be used in the future while maintaining ethical and legal standards.
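As a rough illustration of what "totals only" can look like in practice, here is a minimal Python sketch. It is not Aino's actual tool or database: the input format (one list of restriction notes per student, with no names attached), the `normalize` helper, and the alias table are all hypothetical, chosen only to show how differently worded notes can be counted without handling individual student details.

```python
from collections import Counter

# Hypothetical alias table: maps differently worded notes to one label.
ALIASES = {
    "gluten free": "gluten-free",
    "no gluten": "gluten-free",
    "lactose free": "lactose-free",
}

def normalize(note: str) -> str:
    """Lower-case a note, trim it, and map known variants to one label."""
    key = note.strip().lower()
    return ALIASES.get(key, key)

def total_restrictions(classes):
    """Return the number of students per restriction across all classes.

    `classes` is a list of classes; each class is a list of students;
    each student is a list of restriction notes. No names are passed in.
    """
    totals = Counter()
    for students in classes:
        for notes in students:
            # A set ensures one student is counted once per restriction,
            # even if the same restriction was noted twice.
            totals.update({normalize(n) for n in notes})
    return dict(totals)

# Made-up example data for two classes.
classes = [
    [["Gluten free"], [], ["lactose free", "no gluten"]],
    [["vegetarian"], ["gluten-free", "Gluten free"]],
]
totals = total_restrictions(classes)
```

A manual spot check, like the one Aino performs, would compare these totals against a handful of the original records, especially those with unusual wording that the alias table might miss.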

Slide 3: User Reflection

🔹Imagine you are Aino. What would you do in this situation?

🔹How can school leaders balance efficiency and data privacy when using AI for administrative tasks?

Case #14: AI-generated Technology Instructions

Slide 1: Introducing Context and Challenge

Johan appreciates how AI reduces the time he spends keeping up with the technology that is part of his work as a teacher. He often asks AI for help with tasks like converting files into different formats. Johan feels that AI can be an “interpreter” between technology and users who are “not tech people.”

One day, Johan needs to upload student assignments to a new learning platform. He asks AI for step-by-step instructions. The AI provides clear guidance, but Johan later realizes that the platform requires privacy settings to be changed; otherwise, everything uploaded becomes accessible to all users. Only by luck does Johan avoid making students’ personal information public on the platform. The AI never mentioned that these privacy settings existed.

Now Johan wonders:

Can he trust AI instructions?

Who is responsible if student data is exposed because of incomplete AI guidance?

Slide 2: Teacher's Solution

Johan decides to continue using AI for general ICT guidance, but makes changes to his practices. He plans to:

Double-check instructions against the platform’s official user guide and the school’s own instructions.

Ask the school’s IT support to give a green light for his actions when handling files with personal information.

Share his experience with colleagues to raise awareness about AI’s limitations.

Slide 3: User Reflection

🔹Imagine you are Johan. What would you do in this situation?

🔹How can teachers balance the convenience of AI assistance with the need for accurate instructions?

Case #15: Role of AI in Home-School Communication

Slide 1: Introducing Context and Challenge

Liina, a classroom teacher, faces an unexpected situation. A parent messages her about how their child will be affected by the renewal of the individualized pedagogical support system. The parent confidently states that AI has explained how the renewal process works, presenting this as factual information.

However, at this time, no official information has been published by the school or municipality. Liina feels surprised and taken aback:

Her professional understanding of education systems is questioned.

AI is positioned as the ultimate authority, overshadowing her expertise.

She worries about misinformation spreading among parents.

Slide 2: Teacher's Solution

Liina decides to:

Maintain a professional and neutral tone in communication, focusing on verified information only.

Avoid debating AI-generated claims directly.

Suggest that parents rely on official school channels for accurate updates.

Share the incident with staff to develop a common strategy for handling AI-related misinformation in parent communication.

Slide 3: User Reflection

🔹Imagine you are Liina. What would you do in this situation?

🔹How can teachers prepare themselves to address situations where parents present AI-generated content as facts?

Case #16: Adjusting Teaching for Students' AI Competency

Slide 1: Introducing Context and Challenge

Leena, a physics and chemistry teacher, is teaching 7th and 8th graders for the first time. She assumes they know how to use AI responsibly and critically, like her students in upper secondary school did – but quickly realizes that these younger students are not at the same level.

Students often:

Copy AI-generated answers without checking accuracy.

Use AI for homework without understanding the concepts.

Struggle to evaluate whether AI’s explanations are correct or relevant.

Leena feels challenged to guide her students toward critical AI use. She also wonders how to adjust her tasks so they can’t be completed by simply pasting AI answers.

Slide 2: Teacher's Solution

Leena starts by changing the tasks she assigns as homework. They now require personal input and cannot be completed entirely by AI. For example, students take their own photos of experiments or of everyday examples of physical phenomena and include short reflections on what they observed and how it relates to the topic being taught.

She decides that the tasks most vulnerable to AI misuse, such as simple written question-and-answer tasks, will be done in class under her supervision. She is willing to change this once her students have a better understanding of how to use AI responsibly.

Additionally, she introduces AI literacy mini-lessons, teaching students how to question AI outputs and verify information.

Slide 3: User Reflection

🔹Imagine you are Leena. What would you do in this situation?

🔹What factors shape students' AI competency at different ages?

Case #17: Cheating with AI – Assessment Challenge

Slide 1: Introducing Context and Challenge

Marja, a history teacher, believes that students have always found ways to cheat. They have copied from friends, used textbooks during tests, and found answers online. So when AI tools became popular, she assumed they wouldn’t make a big difference.

However, Marja begins to notice something new:

AI doesn’t just provide answers; it creates entire essays, explanations, and arguments instantly.

Students can submit work that looks polished and original, making plagiarism detection harder.

Unlike copying from peers, AI-generated work can bypass the teacher’s ability to see gaps in understanding.

Marja realizes that AI can increase the scale and invisibility of cheating.

Slide 2: Teacher's Solution

Marja talks with her colleagues and asks for help. She decides to:

Shift from purely written assignments to process-based tasks, where students show drafts, reasoning steps, and reflections.

Include oral explanations or quick in-class checks to confirm understanding.

This approach helps her maintain fairness and authenticity in assessment. She also begins to teach students about academic integrity in the age of AI, emphasizing why learning matters beyond producing answers.

Slide 3: User Reflection

🔹Imagine you are Marja. What would you do in this situation?

🔹What reasoning can teachers give students to explain why AI-generated work is not sufficient at school?

Case #18: AI Misinterprets Sports Tests

Slide 1: Introducing Context and Challenge

Sami teaches physical education and decides to use AI to generate personalized feedback for students based on their sports test results. The goal is to save time and provide clear, motivating feedback.

The feedback looks encouraging and good at first. However, Sami notices an issue:

The AI interprets some results as the exact opposite of their correct meaning (e.g., a low score is described as excellent performance).

If shared without checking, this could mislead and confuse students and parents.

Slide 2: Teacher's Solution

Sami decides to:

Carefully review all AI-generated feedback before sharing it with students.

Use prompt refinement to clarify how AI should interpret the data (e.g., “Lower scores indicate weaker performance in this area – give improvement tips accordingly”).

Document the process and share best practices with colleagues to prevent similar mistakes.

Treat AI as a drafting tool, not an authority—human judgment remains essential.
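One way to picture the prompt refinement described above is to state the direction of each test explicitly every time a prompt is built. The Python sketch below is only an illustration under assumptions: the function name `build_feedback_prompt`, the `higher_is_better` flag, and the example test names are hypothetical and do not come from Sami's actual workflow or any particular AI tool.

```python
def build_feedback_prompt(test_name: str, score: float, higher_is_better: bool) -> str:
    """Build a feedback prompt that spells out how the score should be read."""
    if higher_is_better:
        direction = "Higher scores indicate stronger performance"
    else:
        direction = "Lower scores indicate stronger performance"
    return (
        f"Test: {test_name}. Result: {score}. "
        f"{direction} in this test. "
        "Write short, encouraging feedback and give improvement tips accordingly."
    )

# Hypothetical examples: a timed run (lower is better) and a jump (higher is better).
run_prompt = build_feedback_prompt("shuttle run time (s)", 12.4, higher_is_better=False)
jump_prompt = build_feedback_prompt("standing long jump (cm)", 165, higher_is_better=True)
```

Stating the direction in every prompt reduces the chance of inverted feedback, but it does not replace the review step: the teacher still reads each piece of feedback before sharing it.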

Slide 3: User Reflection

🔹Imagine you are Sami. What would you do in this situation?

🔹What opportunities and challenges are involved in motivating students with AI?

Case #19: Judging AI Misuse

Slide 1: Introducing Context and Challenge

Riikka, a language teacher, has started noticing student work where AI misuse seems to be present. Some work seems too polished and advanced compared to the students’ usual performance.

She tries using AI detection tools, but they give inconsistent and unreliable results. Sometimes they flag genuine work as AI-generated, and other times they miss obvious AI-written content.

Riikka realizes that she has no reliable way to judge AI misuse after the work is handed in. This raises concerns about fairness, assessment validity, and trust between teacher and students.

Slide 2: Teacher's Solution

Riikka figures out that the learning environment and tasks need to be planned with consideration for AI use. She decides to:

Shift focus from detection to prevention by designing tasks that require personal engagement (e.g., in-class writing, oral presentations, drafts showing thought process).

Include process-based assessment, where students submit outlines, notes, and reflections alongside final work.

Discuss openly with students about ethical AI use.

Use AI detection tools only as a secondary check, never as the sole basis for judgment.

Slide 3: User Reflection

🔹Imagine you are Riikka. What would you do in this situation?

🔹Why are detection tools offered?

Case #20: Personal Voice in Home-School Communication

Slide 1: Introducing Context and Challenge

Roni, a preschool teacher, communicates frequently with children’s homes, including sending weekly letters to parents. At first, he is thrilled that AI can turn his notes into polished letters with illustrations quickly, saving time.

However, after using AI for a few weeks, Roni notices something off:

The tone of the letters feels impersonal and generic, lacking the warmth and familiarity he values in parent communication.

Parents have told him they appreciate his personal touch, and he worries that AI-generated messages might weaken trust and connection.

Slide 2: Teacher's Solution

Roni decides to:

Stop using AI for weekly letters and write them himself, ensuring they reflect his personality and care. He asks other staff to help with taking photos instead of generating illustrations with AI.

Use AI only for structuring ideas or brainstorming, not for final communication.

Slide 3: User Reflection

🔹Imagine you are Roni. What would you do in this situation?

🔹What impressions may AI-generated communication convey to homes?

🔹Which parts of home-school communication can be eased with AI?

Case #21: Efficiency vs. Copyright

Slide 1: Introducing Context and Challenge

Manna, a biology and geography teacher, needs multiple versions of the same exam because different classes will take the test on the same topics on different days and she doesn't want them sharing answers.

She is used to working with AI and thinks it could be helpful for the job. She hopes to generate alternative versions of the exam from the same material in minutes.

However, when she opens the textbook-provided exam and an AI tool, she stops. She questions whether the content she is about to use in her prompts is her own or legally usable (copyright-compliant).

Slide 2: Teacher's Solution

Manna decides to:

Use her own original questions as the base material for AI to create variations.

Not upload copyrighted textbook content into AI tools.

Document her process to ensure transparency and compliance with school policies.

Review all AI-generated versions for correctness and difficulty level.

Slide 3: User Reflection

🔹Imagine you are Manna. What would you do in this situation?

🔹Would you know how to find out what materials can be used as input when generating AI content?

Case #22: Privacy Concerns in Using AI for CV Creation

Slide 1: Introducing Context and Challenge

Maija, a study counsellor, wants to help students prepare for working life by creating professional CVs. She considers using AI tools to make the process easier and more engaging.

However, Maija has concerns:

CVs include personal information such as names, contact details, and home addresses.

She wonders if this data is safe when entered into AI tools.

Slide 2: Teacher's Solution

Maija decides to:

Avoid entering sensitive personal data into AI tools. Instead, she uses placeholders (e.g., “Name,” “Address”) when generating CV templates.

Teach students to finalize CVs offline, adding personal details themselves.

Choose AI tools that are approved by the school or municipality and comply

Include a short privacy awareness session, explaining why personal data should not be shared with external AI services.
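The placeholder approach can be illustrated with a short sketch. It assumes the AI tool only ever produces template text containing placeholders, and that the real details are filled in locally on the student's own device. The placeholder names, the `fill_offline` function, and the sample details are hypothetical and invented for illustration.

```python
from string import Template

# Template text as it might come back from an AI tool: placeholders only,
# no personal data. The field names are hypothetical.
cv_template_text = (
    "$NAME\n"
    "$ADDRESS\n"
    "$EMAIL\n"
    "\n"
    "Profile\n"
    "A motivated student looking for a summer job.\n"
)


def fill_offline(template_text: str, details: dict) -> str:
    """Fill placeholders locally; nothing is sent to an external service."""
    # substitute() raises KeyError if a placeholder has no matching detail,
    # which helps catch a forgotten field before the CV is printed.
    return Template(template_text).substitute(details)


# Invented example details, entered by the student on their own device.
finished_cv = fill_offline(
    cv_template_text,
    {
        "NAME": "Example Student",
        "ADDRESS": "Example Street 1",
        "EMAIL": "student@example.com",
    },
)
print(finished_cv)
```

The design choice here is that personal details only ever exist in the local step, never in the text sent to the AI tool.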

Slide 3: User Reflection

🔹Imagine you are Maija. What would you do in this situation?

🔹What are some other situations in schools where similar concerns are relevant?

🔹How can schools guide students to be conscious of their data privacy with AI?

Case #23: AI Support in Math

Slide 1: Introducing Context and Challenge

Milla, a math teacher, has a student who often struggles in class unless someone guides him through the task step by step. One day, the student tells Milla that he asked AI for help with a math problem. Milla is worried that the student has just copied the answer, but the student shows what he did.

Instead of just giving the answer, the AI provided what Milla agreed were clear, logical instructions: what to do first, what comes next, and how to check the result. The student was thrilled – he solved the problem and actually understood the process. However, many others try to use AI simply to copy the answers. This experience made Milla wonder:

Could AI become a valuable tool for scaffolding learning?

How can students learn to ask the right questions so AI gives helpful guidance?

Slide 2: Teacher's Solution

Milla decides to:

Teach students how to prompt AI effectively (e.g., “Explain step by step how to solve this equation”).

Position AI as a secondary support tool that can continue guiding students after the teacher’s own guidance and, when needed, hands-on explanations with concrete manipulatives.

Encourage students to compare AI’s instructions with their own reasoning, checking if the steps make sense.

Slide 3: User Reflection

🔹Imagine you are Milla. What would you do in this situation?

🔹When is the right time to introduce AI tools with a struggling student?

Case #23: Learning and Teaching about AI

Slide 1: Introducing Context and Challenge

Raisa, a school principal, believes that teachers must first know how to use AI before introducing it to students. However, AI tools are evolving so quickly that teachers are often learning and teaching at the same time.

This creates challenges:

Teachers feel uncertain about their own skills while guiding students.

There is little time for structured training before classroom use.

Mistakes or misunderstandings can occur, affecting trust and confidence.

Slide 2: Principal's Solution

Raisa decides to implement peer learning sessions, where teachers share experiences and tips about AI use.

She encourages a “learn together” approach—teachers openly tell students they are exploring AI too, modeling lifelong learning.

She works with the city to provide clear guidelines for safe and ethical AI use, even if technical mastery takes time.

She starts with low-risk tasks (e.g., brainstorming, idea generation) before moving to high-stakes activities like grading or assessment.

Slide 3: User Reflection

🔹Imagine you are Raisa. What would you do in this situation?

🔹How can schools create a culture where teachers feel comfortable learning and experimenting with AI alongside their students?

Case #24: AI Assistance for Specific Use

Slide 1: Introducing Context and Challenge

The school gym has a very complicated lighting system. Teachers often struggle to adjust the lights for different events. One teacher who is often in charge of adjusting the lights knows the issue well.

The system is complex and unintuitive.

The only instructions are in a 900-page manual.

General AI tools and internet searches provide no help.

Slide 2: Teacher's Solution

One teacher decides to try something new: they upload the entire manual into an AI tool and create an AI agent that can answer questions like:

“How do I set the lights for a basketball game?”

“What options would be useful for a school play where a spotlight is needed?”

The teacher documents the steps that made the approach work:

Upload the full manual into an AI tool capable of handling large documents.

Create a custom AI assistant (agent) that interprets the manual and gives step-by-step instructions for any desired effect.

Test the responses for accuracy before relying on them during events.

Share the tool with other staff.
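The testing step above could be supported by a small checklist script. This is a rough sketch only: the `missing_terms` function, the sample question, the sample answer, and the expected terms are hypothetical, and in practice the expected terms would be taken from the relevant pages of the actual manual. The check only shows whether an answer mentions the terms the teacher expects; it does not replace trying the instructions on the real system.

```python
def missing_terms(answer: str, required_terms: list[str]) -> list[str]:
    """Return the expected terms that do not appear in the AI's answer."""
    lowered = answer.lower()
    return [term for term in required_terms if term.lower() not in lowered]


# Hypothetical test case prepared by the teacher from the manual.
question = "How do I set the lights for a basketball game?"
expected = ["preset", "court", "brightness"]

# Hypothetical answer copied from the AI assistant.
answer = "Select the sports preset and raise the brightness to full."

gaps = missing_terms(answer, expected)
if gaps:
    print(f"Check manually, answer does not mention: {gaps}")
else:
    print("Answer mentions all expected terms.")
```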

Slide 3: User Reflection

🔹Imagine you are a teacher at this school. What would you do in this situation?

🔹What is required for the kind of solutions that this teacher came up with?

🔹What specific uses are not suitable for AI assistance?

Case #25: AI-Suggested Feedback

Slide 1: Introducing Context and Challenge

Milja, a chemistry teacher, is reviewing work plans that students have submitted on a digital platform. While browsing, an integrated AI tool automatically offers feedback ideas for each plan.

Milja reads the suggestions and finds them sensible, providing constructive points she might not have thought of immediately. This saves time and sparks new ideas for guiding students.

However, Milja reflects on some challenges:

Can she trust AI-generated feedback?

How can she ensure that feedback remains personalized and pedagogically sound, not just generic AI suggestions?

Slide 2: Teacher's Solution

Milja decides not to use AI-generated feedback directly. Instead, she:

Reads the suggestions for inspiration but writes her own feedback to maintain a personal and professional voice.

Ensures that her comments reflect each student’s unique needs.

Milja assesses that this way she benefits from AI suggestions while preserving authenticity and pedagogical integrity.

Slide 3: User Reflection

🔹Imagine you are Milja. What would you do in this situation?

🔹What risks and opportunities are present when AI suggests feedback without the teacher prompting it?